When the data is wrong

In the desire to be data-driven, you need to be asking for the data you don’t have.

Back in World War II, the Allies were losing bombers over occupied Europe at an alarming rate. Pilots were known to talk about the ‘coin-toss’: the 50/50 chance that they would make it back alive from each mission, so high was the rate of loss. Large and relatively slow, their aircraft were extremely susceptible to the bullets of faster, more agile enemy fighters.

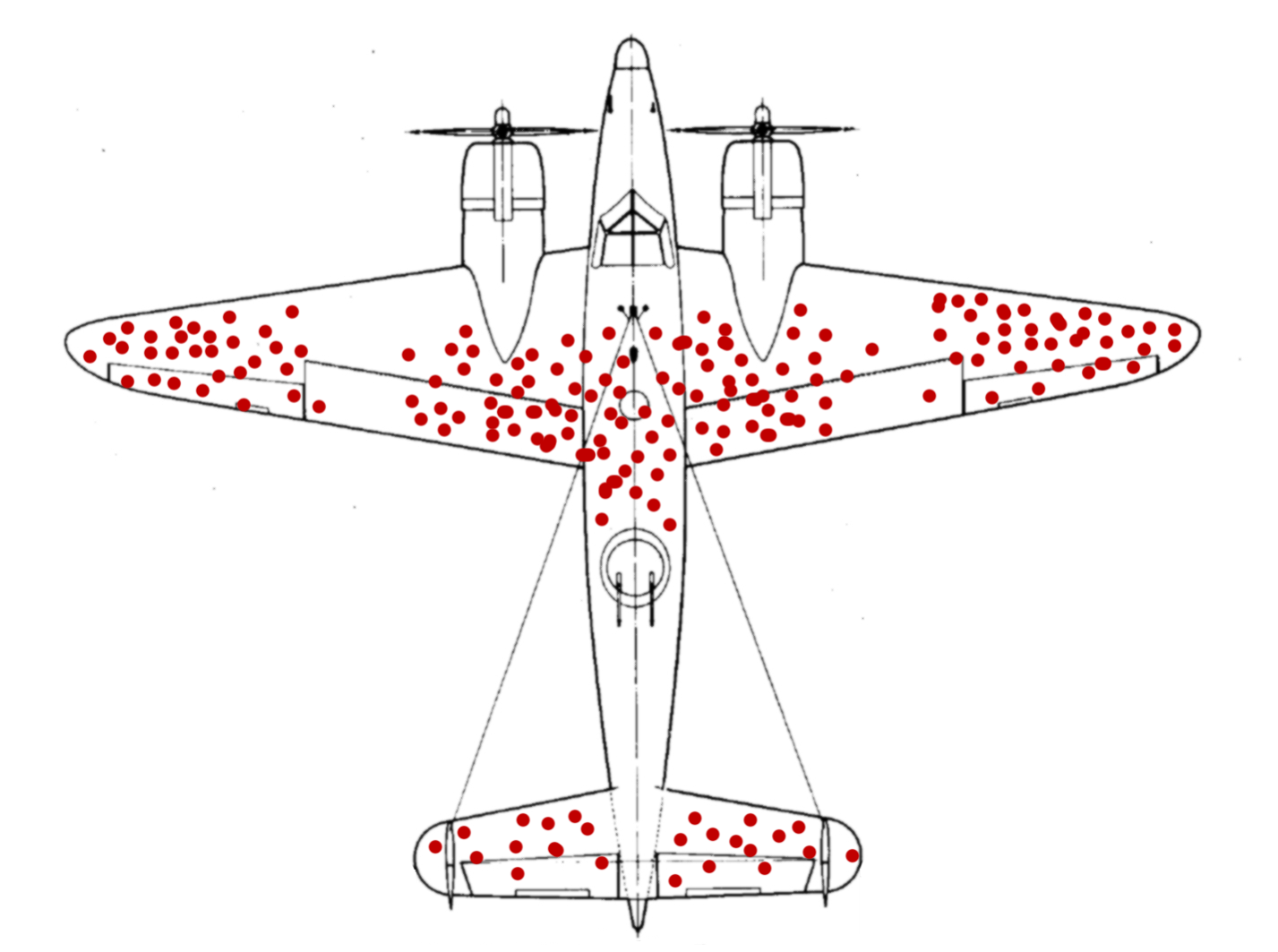

To address this, commanders set up a project to review returning aircraft and assess where most damage was being taken, with the aim of increasing survivability. The data seemed obvious. Most hits were being taken in the fuselage, the wing tips and the tail. These were all areas that could be reinforced with armour to provide additional protection to the aircraft.

But a Hungarian-Jewish statistician called Abraham Wald identified a critical flaw in the analysis.

Only planes which survived had been analysed, not those which had been lost. In other words, they needed to reinforce the areas that were unscathed on returning aircraft, as hits in those areas were almost certainly what was causing catastrophic loss. Wherever planes were making it back with bullet holes, the plane could evidently survive such damage.

Wald’s analysis, which sounds obvious, is actually contrary to most of our thinking. Especially when we think under pressure. We apply survivor bias. We look at the data we have, not the data we don’t.

In marketing, it’s unlikely our data analysis is as critical as Wald’s. He’s credited with helping turn the tide of a war. But there are important lessons for us.

When we analyse our reach, conversions, even sales, we can easily apply the same survivor bias that Wald identified. We look at what we think we know for sure, while forgetting there is a whole world we don’t know.

An example of this bias happened to us with a major car manufacturer.

Sales-people were rating the likelihood of their prospects to buy, and following up accordingly. And of those prospects followed up, a staggering percentage went on to buy a car. The sales-people’s assessment was accurate; the data proved it.

Only it didn’t.

Because an independent research company followed up with the people who were judged ‘time-wasters’ or unlikely to buy a car in the short term. What did they find? Exactly the same proportion went on to buy a car, but either from another dealership or from another brand.

The customers bought a car where there was follow-up. The sales-people were selling because they were following up, not because of some mystical analytical ability.

We make these survivor bias judgments all the time, from choosing an action which we believe results in an outcome, to assessing a decision we took many years ago and crediting it with everything that happened since, even though we cannot know what the alternative data would have shown.

In marketing and communications, we can research that alternative data.

We can ask: why didn’t you react, respond, buy; what could we have done differently; how did our control group without this stimulus react; who bought our product without seeing the campaign?
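
As a rough illustration of the control-group question, the sketch below compares buyers among people who saw a campaign with buyers in a control group who did not. Every name and figure in it (`exposed`, `control`, `conversion_rate`, the counts) is invented for illustration; none of it comes from the car manufacturer example or from any real campaign.

```python
def conversion_rate(buyers: int, people: int) -> float:
    """Return the share of a group that went on to buy."""
    if people <= 0:
        raise ValueError("group must contain at least one person")
    return buyers / people


# Hypothetical counts, made up for illustration only.
exposed = {"people": 2000, "buyers": 100}  # saw the campaign
control = {"people": 2000, "buyers": 90}   # did not see the campaign

exposed_rate = conversion_rate(exposed["buyers"], exposed["people"])
control_rate = conversion_rate(control["buyers"], control["people"])

# The data we have: everyone who bought after seeing the campaign.
naive_credit = exposed["buyers"]

# The data we usually lack: how many would have bought anyway,
# estimated by applying the control group's rate to the exposed group.
expected_anyway = control_rate * exposed["people"]
incremental_buyers = exposed["buyers"] - expected_anyway

print(f"Exposed conversion rate: {exposed_rate:.1%}")
print(f"Control conversion rate: {control_rate:.1%}")
print(f"Sales naively credited to the campaign: {naive_credit}")
print(f"Sales the control group suggests it added: {incremental_buyers:.0f}")
```

With these made-up numbers, the campaign looks as if it produced 100 sales, yet the control group suggests roughly 90 of those people would have bought anyway. A real analysis would also need to check whether a gap that small could simply be down to chance.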

Always, always look for the data you don’t have, not just the data you do.

Be the Wald of your marketing or communications team.